Dr. William Davis, Milwaukee-based “preventive cardiologist” and Medical Director of the Track Your Plaque program, argues in his new book, Wheat Belly: Lose the Wheat, Lose the Weight, and Find Your Path Back to Health, that “somewhere along the way during wheat's history, perhaps five thousand years ago but more likely fifty years ago, wheat changed.” And not for the better.

William Davis, MD, hosted at The Wheat Belly Blog

According to Dr. Davis, the introduction of mutant, high-yield dwarf wheat in the 1960s and the misguided national crusade against fat and cholesterol that caught steam in the 1980s have conspired together as a disastrous duo to produce an epidemic of obesity and heart disease, leaving not even the contours of our skin or the hairs on our heads untouched. Indeed, Dr. Davis argues, this mutant monster we call wheat is day by day acidifying our bones, crinkling our skin, turning our blood vessels into sugar cubes, turning our faces into bagels, and turning our brains into mush.

Dr. Davis's central thesis is that modern wheat is uniquely able to spike our blood sugar with its high-glycemic carbohydrate and to stimulate our appetite with the drug-like digestive byproducts of its gluten proteins. As a result, we get fat. And not just any fat — belly fat. “I'd go so far as saying,” he writes, “that overly enthusiastic wheat consumption is the main cause of the obesity and diabetes crisis in the United States” (p. 56, his italics). Abdominal obesity brings home a host of inflammatory factors to roost, causing insulin resistance and the production of small, dense LDL particles prone to oxidation and glycation. The high blood sugar and insulin levels further contribute to acne, hair loss, and the formation of advanced glycation endproducts (AGEs) that accelerate the aging process.

Beyond these effects, Dr. Davis contends, both those who suffer from celiac and some as yet unknown number of people who don't may develop all sorts of physical and psychological problems caused by immune reactions to wheat gluten. The high content of sulfur amino acids leads to overproduction of sulfuric acid, he argues, leaching minerals from our bones. In short, this so-called “food” is a nightmare responsible for virtually every degenerative disease under the sun.

Although these arguments often lack the “smoking gun” that would satisfy a skeptical scientist, Dr. Davis proposes a number of interesting hypotheses to explain why his patients have consistently improved their health simply by cutting out all of the processed junk food in their diets, including processed junk food made from “healthy whole grains,” and replacing this pseudo-food with unlimited amounts of real foods like grass-fed meats, eggs, cheese, vegetables, nuts, seeds, and traditional fats and oils, supplemented with small amounts of fruits, legumes, non-gluten grains, and fermented soy. Although I think these recommendations could be improved by taking a more liberal attitude toward carbohydrates for those who can tolerate them, I think many people eating the modern, industrial diet are likely to improve their health on this eating plan.

Dr. Davis promotes the plan with a casual, conversational, and often humorous tone, which is likely to make the book a great success. As such, I believe it deserves our attention and can serve as a useful starting point for discussing the potential contribution of wheat products to the modern epidemics of obesity, diabetes, and cardiovascular disease.

Wheat Then and Now — The Rise of Modern Dwarf Wheat

Although Dr. Davis provides no evidence linking modern degenerative diseases directly to the development of high-yield dwarf wheat, his hypothesis that the wheat of the last 50 years is quite different in its effects from the wheat of ages past is attractive both because of the ease with which we can reconcile it to several important pieces of scientific evidence and because of the ease with which this message can be spread to many communities who value the role that wheat has played in their ethnic and religious histories, a topic that Dr. Davis addresses with the sensitivity of a true gentleman.

The Work of Weston Price and Sir Robert McCarrison

Weston Price, DDS, courtesy of Price-Pottenger Nutrition Foundation

This hypothesis is, first and foremost, much easier to reconcile to the findings of Weston Price and Sir Robert McCarrison than competing hypotheses that target all forms of wheat with equal vigor. In his epic work, Nutrition and Physical Degeneration (1), Price documented the physical degeneration that consistently followed the introduction of “the displacing foods of modern commerce,” which he identified as white flour, sugar, polished rice, syrups, jams, canned goods, and vegetable oils. Price nevertheless held the health-promoting value of whole wheat in high esteem based on several lines of observational, experimental, and clinical evidence.

“The most physically perfect people in northern India,” Price wrote, “are probably the Pathans who live on dairy products largely in the form of soured curd, together with wheat and vegetables. The people are very tall and are free of tooth decay” (ref. 1, p. 291).

Robert McCarrison, MD, D Sc., Hon. LL.D., from The Wheel of Health.

Price did not record traveling to India himself, but he was aware of the work of Sir Robert McCarrison (ref 1, p. 479), who had studied many different populations in India with differing levels of health. McCarrison held the wheat-eating Pathans and Sikhs in high esteem, but reserved a special mention for the wheat-eating Hunzas, among whom he had spent seven years of his life. Although McCarrison noted that the health of the Hunzas declined in the later years of World War I, and although later visitors would describe quite poor health among the Hunzas after World War II, McCarrison claimed that in earlier years they were marked by a great degree of physical perfection (ref. 2, p. 9):

My own experience provides an example of a race, unsurpassed in perfection of physique and in freedom from disease in general, whose sole food consists to this day of grains, vegetables, and fruits, with a certain amount of milk and butter, and goat's meat only on feast days. I refer to the people of the State of Hunza, situated in the extreme northernmost point of India. So limited is the land available for cultivation that they can keep little livestock other than goats, which browse on the hills, while the food-supply is so restricted that the people, as a rule, do not keep dogs. They have, in addition to grains — wheat, barley, and maize — an abundant crop of apricots. These they dry in the sun and use very largely in their food.

Amongst these people the span of life is extraordinarily long; and such service as I was able to render them during some seven years spent in their midst was confined chiefly to the treatment of accidental lesions, the removal of senile cataract, plastic operations for granular eyelids, or the treatment of maladies wholly unconnected with food-supply. Appendicitis, so common in Europe, was unknown. When the severe nature of the winter in that part of the Himalayas is considered, and the fact that their housing accommodation and conservancy arrangements are of the most primitive, it becomes obvious that the enforced restriction to the unsophisticated foodstuffs of nature is compatible with long life, continued vigour, and perfect physique.

McCarrison performed extensive laboratory experiments attempting to replicate the health and disease seen in different Indian groups and in the Europeans of his day by feeding rodents diets designed to mimic those he encountered in each population. He concluded that diets based mostly on wheat or rice would cause deficiency diseases, but that more balanced diets containing wheat along with other foods, in conjunction with proper hygiene and comfort, provided his rats with the best health.

In both humans and animals, excessive reliance on rice, but not wheat, was associated with B vitamin deficiencies and the neurological diseases that invariably come with them. He concluded, “The prescription of ancient Indian hakims — ‘stop eating rice and take a diet of whole wheat and milk' — can hardly be bettered.” Excessive reliance on wheat promoted a wide variety of infectious diseases and an unknown factor in wheat germ promoted soft tissue calcification, but all these effects were easily overcome by providing adequate sources of vitamin A and phosphorus. Diets modeled after that of the Sikhs, which contained wheat but also dairy, legumes, vegetables, meat, bones, and fat, produced excellent health in McCarrison's rats (ref. 3, p. 269; 274-5).

Image from The Wheel of Health.

Weston Price had his own encounters with healthy wheat-eaters. As Research Director for the American Dental Association, he had already established situations in which cavities would spontaneously heal through the deposition of secondary dentin. In Dental Infections, Oral and Systemic (4), Price described cavities transiently appearing in women during pregnancy and spontaneously healing after they gave birth.

In Nutrition and Physical Degeneration (ref 1, p. 85), he described a Canadian Indian reservation where 4,700 Native Americans from various nations including Mohawks, Onondagas, Cayugas, Senecas, Oneidas, Delawares, and Tuscaroras lived under highly modernized conditions. On the reservation, there was a training school called the Mohawk Institute that was home to some 160 children. Students there consumed dairy from the reservation's own herd, whole wheat bread, fresh vegetables, and a small amount of sugar and white flour. Seventeen percent of the teeth he examined showed evidence of past cavities, but all of them had healed while on the training school's diet. He discovered not a single active cavity within the confines of the institute.

Price performed laboratory experiments in which rats fed white flour developed tooth decay, infertility, patchy hair growth, and poor body weight, while those fed freshly ground whole wheat were healthy (ref 1, p. 278). In his clinical practice, Price used rolls made from freshly ground whole wheat, spread with high-vitamin butter obtained from cows consuming wheat grass grown on high-quality soil. He combined these rolls with cod liver oil, butter oil, milk, cooked fruit, animal organs, fish, and stews made from meat, marrow, and vegetables. This wheat-containing diet led to the reversal of tooth decay in his own practice, just as the wheat-containing diet of the Mohawk Institute had apparently done for the institute's students (ref 1, p. 429).

Price also cured a boy of seizures in a single day by switching his skim milk to whole milk, switching his white flour products to gruel made from freshly ground whole wheat, and providing him with supplemental high-vitamin butter oil (ref 1, p. 271). Price believed that these changes had cured the boy by normalizing his blood levels of calcium.

If, in fact, modern dwarf wheat differs in its biological effects from the wheat of 100 years ago, it becomes much easier to understand how wheat could have seemed so health-promoting to Drs. Price and McCarrison and yet can seem so destructive to Dr. Davis.

Dwarf Wheat Is Deficient in Minerals

If Price and McCarrison were alive today, they would almost certainly agree with Davis on one thing: modern dwarf and semi-dwarf wheat should not be consumed as a major portion of the diet, even as a “healthy whole grain.” The overwhelming emphasis of both Price and McCarrison was on the nutrient density and the nutrient balance of the diet. Recent research has shown that whole wheat has become much poorer in nutrients since the Green Revolution gave us these high-yield, hybrid mutants.

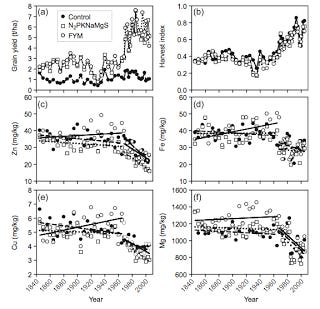

The Broadbalk Wheat Experiment has provided us with strong evidence of this decline in nutrient content (5). Since the beginning of the experiment in 1843, generations of investigators have grown sixteen different cultivars of wheat in parallel plots using different types of fertilizer and different types of agronomic treatments such as crop rotation, herbicides, and fungicides. At the end of each harvest, they've oven-dried samples of grain and straw, and air-dried samples of soil. A group of researchers recently examined the trend in mineral content over time, and their findings were rather disturbing:

In the top two panels, we see that yield suddenly began increasing in 1968, when the first short-straw, “semi-dwarf” cultivar was introduced. Efforts to put an end to world hunger beginning in the 1940s had focused on increasing grain yields by drowning the plants in nitrogen-rich fertilizer. This posed a practical problem for wheat: the harvested portion would grow so large that the plant would become top-heavy and the stalk would buckle, killing the plant. Dwarf and semi-dwarf wheat provided a solution: since the stalks are shorter and stronger, these varieties can better hold up heavy seed heads. In the top right panel, we see that the “harvest index,” which is the ratio of the harvested grain to the total size of the plant, increased after 1968. In the top left panel, we see that this led to increased yield for wheat grown with farmyard manure or chemically defined fertilizer, but not for wheat grown in control plots.
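For readers who like to see the arithmetic, here is a minimal sketch of the harvest index as defined above: harvested grain divided by the total size of the plant. The function name and the masses are hypothetical, chosen only for illustration; they are not values from the Broadbalk data.

```python
def harvest_index(grain_mass: float, total_plant_mass: float) -> float:
    """Return the fraction of the plant's total mass that is harvested grain."""
    if total_plant_mass <= 0:
        raise ValueError("total_plant_mass must be positive")
    if not 0 <= grain_mass <= total_plant_mass:
        raise ValueError("grain_mass must lie between 0 and total_plant_mass")
    return grain_mass / total_plant_mass


# Hypothetical masses (arbitrary units), NOT measurements from the experiment:
# a tall cultivar that puts more of its growth into straw, and a semi-dwarf
# cultivar of the same total mass that puts more of it into grain.
tall = harvest_index(grain_mass=3.0, total_plant_mass=10.0)
semi_dwarf = harvest_index(grain_mass=5.0, total_plant_mass=10.0)

print(f"tall cultivar harvest index:       {tall:.2f}")
print(f"semi-dwarf cultivar harvest index: {semi_dwarf:.2f}")
```

With the total size of the plant held constant, a higher harvest index means more grain per plant, which is why a shorter stalk can translate into a larger yield.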

In the bottom four panels, we see that the introduction of semi-dwarf wheat coincided with sizable declines in zinc, iron, copper, and magnesium content. The authors stated that they found similar trends for phosphorus, manganese, sulfur, and calcium. Even for wheat grown in parallel during the same years, regardless of fertilizer, semi-dwarf whole wheat had 18-29 percent lower mineral content than traditional whole wheat. The phytate content didn't decline as much as the mineral content, so the minerals in semi-dwarf whole wheat are not only fewer and far between but probably less bioavailable as well.

Thus, Price and McCarrison would likely have called for a return to heritage varieties of wheat on the basis of nutrient density alone.

Wheat Intake Is Lower Today Than in the Days of Yore

Dr. Davis's specific targeting of modern dwarf wheat also makes it easier to reconcile his wheat-centric view of obesity to the historical trends in American wheat consumption. Dr. Stephan Guyenet sent me an interesting USDA briefing written by Gary Vocke on the consumption of wheat over the last two centuries (6). Here is a graph showing the change over time:

Like Dr. Davis, Vocke credits the campaign against animal fat and cholesterol for the increase in wheat consumption that began in the 1970s, and the interest in low-carbohydrate diets for the leveling off that occurred after 1997. But we can also see that wheat consumption was much greater in the nineteenth century. These data are especially strong from 1935 onward when the Economic Research Service began monitoring the trend, and we can see that even in 1935 wheat consumption was higher than it is today.

The nineteenth century ushered in not only a greater availability of wheat to people living in regions where it didn't grow well, but also the transition to hard wheat, whose total protein and gluten content is higher than that of soft wheat. The latter decades of that century also ushered in the refining process, which increases the gluten content of flour by removing the germ and concentrating the endosperm. The twentieth century, by contrast, ushered in more sedentary lifestyles that led to a decrease in total food consumption, as well as a more diverse diet as Americans became wealthier and more knowledgeable about nutrition. Both of these factors caused wheat consumption to decline.

If wheat is responsible for the post-1970s obesity epidemic, why didn't it cause an epidemic of even greater proportions a hundred years earlier? It would seem that either wheat itself, the way it is processed, the way we mix it with other foods, or our sensitivity to it has changed. Dr. Davis's proposal that “50 years ago, wheat changed” is one of several explanations that make the notion of a wheat-induced epidemic of obesity much easier to entertain.

The Incidence of Celiac Disease Is Increasing

One of the most intriguing pieces of circumstantial evidence that Dr. Davis presents in support of his crusade against modern dwarf wheat is the rising incidence of celiac disease (Wheat Belly, p. 79-82). A recent study compared frozen serum samples collected between 1948 and 1952 to serum samples collected at the time of analysis (7). After verifying the stability of IgA antibodies in the old samples, the investigators concluded that celiac disease is over four times more prevalent today than it was 60 years ago. A similar study suggested that the incidence of celiac disease in the Finnish population doubled from one to two percent between 1980 and 2000 (8).

There is some preliminary evidence that the proteins that contribute to celiac disease are present in greater concentrations in more recent strains of wheat (9). Dr. Davis's hypothesis that increased gluten exposure from modern dwarf and semi-dwarf wheat has contributed to the increase in celiac disease thus seems plausible.

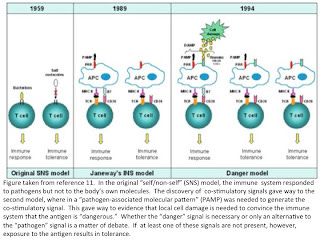

Nevertheless, it would be wise for us to consider other hypotheses, as there is rarely if ever any biological phenomenon that is caused by one thing and one thing alone. Our current understanding of molecular immunology suggests that for our immune systems to mount a response to a protein fragment, in addition to simple exposure to the protein and the genetic capacity to recognize it, our immune cells must also recognize either a “pathogen” signal from an invading enemy or a “danger signal” released from other cells undergoing stress or necrosis (death). Some scientists even argue that the stressed or dying cells provide the overriding signal rather than the pathogen. In the absence of any evidence of “danger,” exposure to a given protein fragment induces tolerance rather than an immune response (10, 11). This would suggest that pre-existing infection or dysbiosis may be needed to prime the immune system to respond to gluten.

In addition to the need for a “pathogen” or “danger” signal, there is yet another reason to believe that pre-existing infection or dysbiosis is a prerequisite for celiac disease: in order for the T cell to properly recognize the problematic fragment of the gluten protein, this fragment must first be deamidated by an enzyme called tissue transglutaminase (12). “Deamidation” involves the removal of nitrogen from certain amino acids to produce their acidic counterparts, such as the removal of nitrogen from glutamine to produce glutamate. Our cells only release tissue transglutaminase when they are attempting to recover from tissue damage.

Lo and behold, what has the food industry decided to do to the wheat gluten it adds to processed junk food in the last several decades? Deamidate it! Yesiree, sometimes by chemical treatment (13), and sometimes by treating it with . . . drumroll . . . tissue transglutaminase (14)!

The close of World War II, moreover, ushered in the Age of the Antibiotic, when massive Pentagon funding for penicillin all but obliterated the British and American emphasis on vitamin A in cod liver oil as our major protection against infectious diseases, which had dominated the pre-war era (15). This likely led to an epidemic of antibiotic-induced intestinal dysbiosis, which might have contributed to the intestinal inflammation needed to mount a response to gluten. It also likely led to a dramatic decline in the intake of vitamin A, which McCarrison had identified as one of the major factors missing in a wheat-heavy diet.

It therefore seems that Dr. Davis is correct that wheat has changed, but there are likely many other pieces to the puzzle.

Wheat and the Archeological Record

Dr. Davis concludes Wheat Belly (p. 225-8) by leaving the reader with several questions:

Should we thank wheat for propelling civilization forward, or was agriculture “in many ways a catastrophe from which we have never recovered,” as Jared Diamond has suggested?

If modern grains are at the root of most diseases, how will we feed the world?

To what extent can a return to heritage varieties of wheat such as einkorn or emmer reverse the problems that modern wheat has caused?

“I won't pretend to have all the answers,” he writes. “In fact, it may be decades before all these questions can be adequately answered.” Without definitively concluding whether agriculture and grain consumption are compatible with human health, he argues that our obsession with modern dwarf wheat has taken the agricultural paradigm to the extreme by minimizing variety and maximizing the “convenience, abundance, and inexpensive accessibility . . . to a degree inconceivable even a century ago.”

I agree that these questions remain open. We would expect the adaptation to agriculture to have required a learning curve as humans developed ways to cope with increased population density and the increased burden of infectious diseases that it produced, to learn how best to prepare agricultural products, and to learn how best to combine them with other foods. The existence of many healthy agricultural populations suggests that agriculture is indeed compatible with human health, but whether it constitutes a path by which we can achieve the optimal diet may well remain a mystery to many unto perpetuity.

Got Processed Junk in That “Whole Wheat” Trunk?

Dr. Davis and I approach these questions from two different but complementary perspectives. I imagine that as a clinician Dr. Davis is concerned first and foremost with what he can do to bring immediate help to his patients. As a researcher, I am concerned with slicing and dicing the evidence in as many ways as possible to sort out what questions remain and how we can best attempt to answer them. Ultimately, clinicians and researchers both want to put useful research into clinical practice to help people. From this point forward in my review, I'm going to put my “researcher hat” on and emphasize alternative interpretations of the data that Dr. Davis has presented.

Wheat Belly doesn't include a map of a modern grocery store, but I imagine if Dr. Davis had chosen to include one, it would look something like this:

If I were to draw a map of a grocery store, it would look something like this:

Dr. Davis details the sheer quantity of foods he removes from his patients' diets under the umbrella of “wheat” (p. 13):

In fact, apart from the detergent and soap aisle, there's barely a shelf that doesn't contain wheat products. Can you blame Americans if they've allowed wheat to dominate their diets? After all, it's in practically everything.

He warns the reader not to exchange “one undesirable group of foods with another undesirable group,” and thus insists that “wheat” should be replaced with “vegetables, nuts, meats, eggs, avocados, olives, cheese — i.e., real food” (p. 193). One of the side benefits of removing wheat is that “you will simultaneously experience reduced exposure to sucrose, high-fructose corn syrup, artificial food colorings and flavors, cornstarch, and the list of unpronounceables on the product label” (p. 195).

Even his patient Larry, who focused his diet on “healthy whole grains,” was eating things like “whole wheat pretzels and these multigrain crackers.” “With four teenagers in the house,” Dr. Davis reports, “clearing the shelves of all things wheat was quite a task, but he and his wife did it” (p. 52-53). Since “wheat” is only one thing and should therefore take about thirty seconds to remove from the home, it would seem that Larry's family must have had an awful lot of processed junk made from “healthy whole grains” in their cabinets.

Wheat Belly contains many stories of patients who reversed a wide variety of health problems after removing wheat from their diets, invariably accompanied by weight loss, in at least one case reaching the miraculous rate of ten pounds in fourteen days (p. 69). Dr. Davis partly blames the high glycemic index of wheat, but dedicates chapter four to the argument that wheat gluten breaks down during digestion into addictive, drug-like compounds called “exorphins” that activate our opiate receptors, causing us to overeat.

Consider this statement from page 49:

In lab animals, administration of naloxone blocks the binding of wheat exorphins to the morphine receptor of brain cells. Yes, opiate-blocking naloxone prevents the binding of wheat-derived exorphins to the brain. The very same drug that turns off the heroin in a drug-abusing addict also blocks the effects of wheat exorphins.

The authors of the study he references for this statement (16) added the exorphins directly to homogenized brain cells and concluded that “it seems pertinent to ask whether these findings have physiological significance” which they would investigate in the future using “whole animal experiments.” By 2003, exorphins had also been found in milk, meat, and rice, and the only biological effect that had been demonstrated for gluten exorphins in humans was a slowing of intestinal transit time (17).

Preliminary evidence had even suggested that a major protein found abundantly in all green plant leaves yields exorphins upon digestion (17). More recent research has shown that epicatechin, found in dark chocolate and green tea, acts as an exorphin in mice (18), and the possible interactions between the flavonoids found in medicinal plants and opiate receptors is an area of active research (19). Food compounds that interact with the opioid system may thus turn out to be ubiquitous. Whether they are absorbed and act directly on our brains to produce drug-like effects that can be blocked with naloxone is another question entirely.

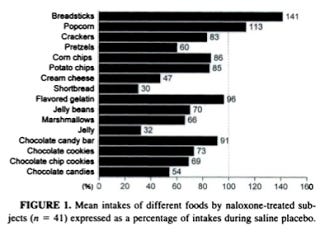

Davis claims that if you “block the euphoric reward of wheat” with naloxone, “calorie intake goes down, since wheat no longer generates the favorable feelings that encourage repetitive consumption,” and that the effect of naloxone “seems particularly specific to wheat” (p. 51). In support of this argument, he cites two studies (20, 21).

It is instructive to first consider their titles: “Naloxone Reduces Food Intake in Humans,” and “Naloxone, an Opiate Blocker, Reduces the Consumption of Sweet High-Fat Foods in Obese and Lean Female Binge Eaters.” So far, nothing about wheat. In fact, the word “wheat” does not occur in either paper. The first study showed that naloxone decreased the intake of protein and fat, but not carbohydrate. Here are the results of the second study:

Here we see that naloxone increased the intake of breadsticks, which, last I knew, were made of wheat. Sure, it was pretty effective in blocking the consumption of a number of wheat-containing foods, but it would be difficult to argue that it decreased the intake of jelly beans and cream cheese simply because these foods were haunted by the ghost of wheat. The authors concluded that “naloxone reduced the consumption of foods that were rich in fat, sugar, or both.” These effects of naloxone, moreover, were specific to binge eaters.

Dr. Stephan Guyenet's expertise is in neurobiology, and he has recently been reviewing evidence on his blog, Whole Health Source, suggesting that the palatability of a food, regardless of its exorphin content, is a major determinant of the food's interaction with the opioid system (22). As Dr. Davis acknowledges (p. 60), food manufacturers go to great lengths to maximize the addictive properties of processed foods. If it were as simple as ensuring they were made of wheat, they would hardly need to pay “flavorists” to achieve this goal.

The fact that food industry giants design these foods to be addictive may be the simplest explanation of why in some people they are, in fact, addictive.

Carbs — How Low Can You Go?

Both Drs. Price and McCarrison recognized that nutrient-poor diets tend to contain an excess of carbohydrate. Price advocated “reducing the carbohydrate intake to a normal level as supplied by natural foods” (ref 1, p. 430). McCarrison noted that as one traveled from the northern parts of India to the southern, one witnessed a gradual decline in the nutrient-density of the diet in conjunction with “a gradual decline in stature, body-weight, stamina, and efficiency of the people” (ref 3, p. 268). The nutritional deficiencies in southern India, he wrote, were associated with “as a rule, an imbalance of the diet with respect to proximate principles; such imbalance usually takes the form of excessive richness of the food in carbohydrate” (ref 3, p. 271). Yet neither of these authors sought to banish carbohydrate, starch, or sugar from the diet altogether, nor did they believe that an excess of carbohydrate per se was the driving factor in any of the diseases they were trying to cure.

Dr. Davis developed his program after discovering that he himself was a diabetic (Wheat Belly, p. 8) and while devising a strategy to help his diabetes-prone patients control their blood sugar (p. 9). It makes sense, then, that he would focus his efforts on regulating blood sugar, but the glycemic index figures so prominently in the Wheat Belly plan that Davis advises all readers, regardless of their metabolic status, that eating whole wheat bread is as bad as or worse than drinking soda or eating candy bars (p. 33), that they should avoid legumes and potatoes (p. 205), and that they can consume as many nuts as they want but should never consume more than eight to ten blueberries or two strawberries at a time (p. 207).

I never count blueberries, but I suspect a small snack for me is closer to 200 blueberries than to ten. Am I on my way to obesity and diabetes? I doubt it.

Dr. Davis argues that carbohydrate-rich foods promote blood sugar swings, leading to food cravings, and promote insulin spikes, leading to fat storage (p. 35). But there is no evidence that minor fluctuations of blood sugar within the normoglycemic range cause harm, and a little bit of fat will nearly flatten the glycemic index of a carbohydrate-rich food in healthy people (23). Whether insulin makes people fat is currently a matter of vigorous debate (24), and Wheat Belly would have benefited if Dr. Davis had presented this relationship as a hypothesis rather than stating it as a simple matter of fact.

Davis acknowledges that “foods made with cornstarch, rice starch, potato starch, and tapioca starch are among the few foods that increase blood sugar even more than wheat products” (p. 72), but he leaves us with no explanation of why the billions of people the world over who live largely on rice, potato, cassava, corn, or similar starches are not fat. Davis presents no evidence that the wheat of 100 years ago contained meaningfully less carbohydrate than today's wheat, and Americans consumed more of it then than now. Yet, as he acknowledges, if you were to “hold up a picture of ten random Americans against a picture of ten Americans from the early twentieth century, or any preceding century where photographs are available . . . you'd see the stark contrast: Americans are now fat” (p. 58).

As I noted in my post on dietary dogmatism, many people report benefits from carbohydrate-restriction and others report the precise opposite. While many people are likely to improve their health by following the plan in Wheat Belly, I think an even larger audience would benefit if the book took a more liberal view of carbohydrate.

Don't Eat the Brown Amino Acids!

The chapters on sulfur amino acids (chapter 8) and advanced glycation endproducts (AGEs, chapter 9) contain a number of technical errors and would best be revised in future printings to make the book more palatable to people with backgrounds or expertise in nutritional science or the biochemistry of AGEs.

Dr. Davis claims that “wheat is among the most potent sources of sulfuric acid, yielding more sulfuric acid per gram than any meat,” and that this sulfuric acid will dissolve our bones, turning them into mush (p. 121). Since I disagree with this theory when vegans say it about meat, it seems only fair to apply the same critical analysis to claims about wheat.

Here is the table that Davis's statement is based on (25):

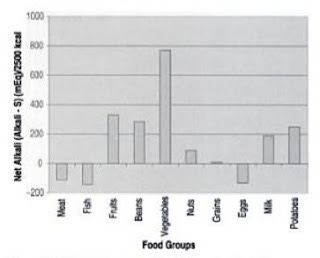

We can see first of all that it says potential sulfate. The sulfur amino acids in these foods (cysteine and methionine) are used for methylation reactions and the synthesis of glutathione and taurine. An excess over the body's needs at any given moment will likely be converted to sulfate and thereby contribute to the metabolic acid load, but one cannot determine what constitutes an “excess” simply from looking at a table. Nor can one ignore the contribution of other food components such as sodium, potassium, calcium, magnesium, chloride, and phosphorus. Even if one is to assume that all of the sulfur amino acids will generate acidic waste products, the only foods with a net acid load are meat, fish, and eggs, while grains are neutral (26):

Image from reference 26.

Certainly one could argue that grains could displace more alkali foods like potatoes, milk, fruits, beans, and vegetables, but to compare eating wheat to falling into a tub of battery acid is a little extreme.

There is, in any case, an elephant standing in the room that we've impolitely ignored until now. The values from the table supposedly showing that wheat generates more sulfate than meat are expressed not per gram of food, but per 100 grams of protein! Two slices of whole wheat bread yield about eight grams of protein, while a 100-gram serving of steak contains three times this amount. These authors were trying to explain why purified animal and plant proteins both increase urinary calcium. After listing many other factors in animal and plant foods besides protein that affect acid-base balance, they concluded as follows:

Animal and plant foods may have different effects on bone health, although these effects are mainly attributable to other constituents of the food and diet, not protein.
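To make the per-protein versus per-serving distinction concrete, here is a minimal sketch that uses only the protein figures quoted above (about eight grams for two slices of bread, three times that for 100 grams of steak). The function names are hypothetical, and no values from the sulfate table are assumed; the sketch only shows how far a per-100-grams-of-protein number shrinks once it is rescaled to a serving.

```python
# Protein per serving, taken from the figures quoted in the text above.
BREAD_PROTEIN_G = 8.0   # two slices of whole wheat bread
STEAK_PROTEIN_G = 24.0  # 100-gram serving of steak (three times the bread figure)


def per_serving(value_per_100g_protein: float, protein_g: float) -> float:
    """Rescale a value expressed per 100 g of protein to a single serving."""
    return value_per_100g_protein * protein_g / 100.0


def break_even_ratio(protein_a_g: float, protein_b_g: float) -> float:
    """Return how many times higher food A's per-protein value must be than
    food B's before a serving of A matches a serving of B."""
    return protein_b_g / protein_a_g


if __name__ == "__main__":
    ratio = break_even_ratio(BREAD_PROTEIN_G, STEAK_PROTEIN_G)
    print(f"Bread would need {ratio:.1f}x the per-protein value of steak "
          "to match it serving for serving.")
```

Under these serving sizes, a per-protein advantage for wheat smaller than threefold would still leave the steak ahead per serving.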

As I have written about in the past, recent evidence suggests that meat increases urinary calcium by helping us absorb more of this mineral from our food. One therefore cannot assume that an increase in urinary calcium means calcium is leaching from the bones.

Davis claims that “increased gluten intake increased urinary calcium loss” in one study “by an incredible 63 percent, along with increased markers of bone resorption — i.e., blood markers for bone weakening that lead to bone diseases such as osteoporosis” (p. 121). The reference he provides for this statement (27) was a randomized crossover study in which the investigators added eleven percent of calories as wheat gluten to yield a total protein intake of 27 percent. This is equivalent to the amount of gluten you would obtain by consuming seventeen pieces of whole wheat bread per day on a 2,500-calorie diet, in which case you'd have to eat almost nothing but bread. Despite the enormous level of gluten and the high level of total protein, the authors considered their results for markers of bone resorption inconclusive and found that although urinary calcium and magnesium increased, there was no effect of wheat gluten on the total loss of either mineral once the amount excreted into the feces was accounted for.
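The seventeen-slice figure can be checked with a few lines of arithmetic. This is a rough sketch under stated assumptions, not the study's own calculation: it assumes the standard factor of 4 kcal per gram of protein (not stated in the text), takes four grams of protein per slice from the "two slices, eight grams" figure above, and treats all of the bread's protein as gluten, which is a simplification. The function names are hypothetical.

```python
KCAL_PER_G_PROTEIN = 4.0   # assumption: standard energy factor for protein
PROTEIN_PER_SLICE_G = 4.0  # from "two slices ... about eight grams" in the text


def gluten_grams(total_kcal: float, gluten_percent_of_kcal: float) -> float:
    """Grams of gluten that supply the given percentage of daily calories."""
    gluten_kcal = total_kcal * gluten_percent_of_kcal / 100.0
    return gluten_kcal / KCAL_PER_G_PROTEIN


def slices_needed(gluten_g: float,
                  protein_per_slice_g: float = PROTEIN_PER_SLICE_G) -> float:
    """Slices of bread needed to supply that much gluten.

    Simplifying assumption: every gram of bread protein is counted as gluten.
    """
    return gluten_g / protein_per_slice_g


if __name__ == "__main__":
    grams = gluten_grams(total_kcal=2500, gluten_percent_of_kcal=11)
    print(f"Gluten per day: {grams:.2f} g")
    print(f"Slices of bread: {slices_needed(grams):.1f}")
```

With these inputs the result comes out at a little over seventeen slices per day, in line with the estimate above.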

Is RAGE the Spirit of the AGE?

A typical workday for me consists of performing experiments or reading scientific literature to study the metabolism of methylglyoxal, one of the two predominant sources of advanced glycation endproducts (AGEs) within the human body. As a result, I found it a little difficult to swallow most of chapter 9.

Dr. Davis claims that most AGEs enter the body from the diet or are produced by high blood sugar when glucose directly attacks proteins, and that once formed they irreversibly accumulate, serving “no lubricating or communicating functions” but simply “forming clumps of useless debris resistant to any of the body's digestive or cleansing processes” (p. 135). All they do, besides filling our tissues with gunk, is activate their receptor, RAGE, to promote oxidative stress and inflammation (p. 138).

I'm pretty sure that receptor-binding constitutes communication, but I'm not so sure that RAGE is a receptor for AGEs, as I described in my previous post, “Is the Receptor for AGEs (RAGE) Really a Receptor for AGEs?”

Most AGEs are formed from dicarbonyls, not from glucose, and dicarbonyls can come from carbohydrate, protein, or fat (in the form of ketones). Dicarbonyls are rapidly detoxified by the insulin-dependent glyoxalase system. To my knowledge, no one has demonstrated that carbohydrate restriction minimizes AGE formation, and one could easily formulate the hypothesis that the opposite is true: that by maximizing insulin signaling and minimizing ketone production, dietary carbohydrate would protect against AGE formation. To my knowledge, no one has demonstrated this either.

Most AGEs that form in the body are spontaneously reversible with half-lives between ten and twenty days. Most AGE-modified proteins are rapidly degraded, releasing free AGEs that are washed away in the urine. Even long-lived extracellular proteins such as skin collagen can be degraded by autophagy. References for these facts can be found in my recent post, “Where Do Most AGEs Come From?”

A recent editorial written by PJ Thornalley and Naila Rabbani, premier experts on AGEs, suggested that these compounds may actually play a role in cell signaling (28):

Evidence of functional regulation of glyoxalase 1 (GLO1) in stress responses in microbial and mammalian metabolism suggests that methylglyoxal modification of proteins is a component of cell signalling in some circumstances.

If I were to write a book on AGEs, I'd probably write about how little we know about them.

Conclusion — The Ultimate Hubris of Modern Humans

Despite disagreeing with some of the points in Wheat Belly, I think Dr. Davis is essentially correct that modern wheat products are representative of the type of hubris that is destroying the health of modern humans (p. 228):

It is the ultimate hubris of modern humans that we can change and manipulate the genetic code of another species to suit our needs. . . . Perhaps we can recover from this catastrophe called agriculture, but a big first step is to recognize what we've done to this thing called “wheat.”

This hubris goes far beyond simply producing a nutrition-poor product in an attempt to obtain high yields. In the first half of the twentieth century, most wheat was not only refined, stripping it of its vitamins and minerals, but was also treated with nitrogen trichloride to improve its baking qualities (29). This “agenizing” process first came under suspicion once it was realized that dogs would develop “canine hysteria” after eating products made from this flour. Scientists identified the toxic component as methionine sulfoximine. In rodents, it caused seizures. By 1950, unproven concerns that it might also be harmful to humans led the United States and United Kingdom to abandon the process. In Canada, it fell out of favor for an entirely different reason: it was highly corrosive and explosive, and thus posed an occupational hazard to the mill workers.

In our day, we still refine the flour, but bleach it with chlorine, chlorine dioxide, or potassium bromate instead. Rather than trying to reconstruct the nutritional composition of the original flour, we add a small handful of nutrients based on “current science,” including synthetic “folic acid,” which is otherwise not found in the food supply. We often chemically or enzymatically deamidate it, mimicking the inflammatory process within the intestines of a celiac patient. We then combine it into foods that have been engineered to maximize their addictive qualities so food companies can maximize their profits. Is it any wonder that “wheat products” would cause disease?

Even after reading Wheat Belly, a basic question remains unanswered for me: should we see wheat as a perpetrator or as a victim? Are we victims of wheat, or of our own hubris? Wheat Belly often presents wheat as the perpetrator, but both its second chapter and its epilogue suggest that we are the perpetrators, and our victims have been both wheat and ourselves.

While there is no doubt that there are people who should avoid wheat altogether, I am left with doubts about whether all wheat past the point of einkorn and emmer must be banished from the human diet in order to lead the majority of us back to health. McCarrison's belief that it is primarily “the enforced restriction to the unsophisticated foodstuffs of nature” combined with a healthy dose of wisdom that is required for “long life, continued vigor, and perfect physique” remains compelling to me.

But I do agree that the processed junk Dr. Davis calls “wheat” should be purged from the diet, that the development of dwarf wheat has taken its toll on us, and that we should steer clear of the packaged foods and meet Dr. Davis for a pow wow in the produce aisle.

References

1. Price WA. Nutrition and Physical Degeneration. 1945.

2. McCarrison R. Studies in Deficiency Disease. (London: Oxford Medical Publications). 1921.

3. McCarrison R. The Work of Sir Robert McCarrison. Sinclair HM, ed. (London: Faber and Faber). 1953.

4. Price WA. Dental Infections, Oral & Systemic. 1923.

5. Fan MS, Zhao FJ, Fairweather-Tait SJ, Poulton PR, Dunham SJ, McGrath SP. Evidence of decreasing mineral density in wheat grain over the last 160 years. J Trace Elem Med Biol. 2008;22(4):315-24.

6. Vocke G. Wheat: Background. USDA ERS Briefing Rooms. https://www.ers.usda.gov/Briefing/Wheat/consumption.htm March 17, 2009. Accessed October 11, 2011.

7. Rubio-Tapia A, Kyle RA, Kaplan EL, Johnson DR, Page W, Erdtmann F, Brantner TL, Kim WR, Phelps TK, Lahr BD, Zinsmeister AR, Melton LJ 3rd, Murray JA. Increased prevalence and mortality in undiagnosed celiac disease. Gastroenterology. 2009; 137(1):88-93.

8. Lohi S, Mustalahti K, Kaukinen K, Laurila K, Collin P, Rissanen H, Lohi O, Bravi E, Gasparin M, Reunanen A, Maki M. Increasing prevalence of coeliac disease over time. Aliment Pharmacol Ther. 2007;26(9):1217-25.

9. van den Broeck HC, de Jong HC, Salentijn EM, Dekking L, Bosch D, Hamer RJ, Gilissen LJ, van der Meer IM, Smulders MJ. The presence of celiac disease epitopes in modern and old hexaploid wheat varieties: wheat breeding may have contributed to increased prevalence of celiac disease. Theor Appl Genet. 2010;121(8):1527-39.

10. Alberts B, Johnson A, Lewis J, Raff M, Roberts K, Walter P. Molecular Biology of the Cell. (New York, NY: Garland Science) 2007. Chapter 25.

11. Li J, Uetrecht JP. The danger hypothesis applied to idiosyncratic drug reactions. Handb Exp Pharmacol. 2010;(196):493-509.

12. Tjon JM, van Bergen J, Koning F. Celiac disease: how complicated can it get? Immunogenetics. 2010;62(10):641-51.

13. Liao L, Zhao M, Ren J, Zhao H, Cui C, Hu X. Effect of acetic acid deamidation-induced modification on functional and nutrition properties and conformation of wheat gluten. J Sci Food Agric. 2010;90(3):409-17.

14. Kuraishi C, Yamazaki K, Susa Y. Transglutaminase: Its Utilization in the Food Industry. Food Rev Int. 2001;17(2):221-46.

15. Semba RD. Vitamin A as “anti-infective” therapy, 1920-1940. J Nutr. 1999;129(4):783-91.

16. Zioudrou C, Streaty RA, Klee WA. Opioid peptides derived from food proteins. The exorphins. J Biol Chem. 1979;254(7):2446-9.

17. Teschemacher H. Opioid receptor ligands derived from food proteins. Curr Pharm Des. 2003;9(16):1331-44.

18. Panneerselvam M, Tsutsumi YM, Bonds JA, Horikawa YT, Saldana M, Dalton ND, Head BP, Patel PM, Roth DM, Patel HH. Dark chocolate receptors: epicatechin-induced cardiac protection is dependent on delta-opioid receptor stimulation. Am J Physiol Heart Circ Physiol. 2010;299(5):H1604-9.

19. Katavic PL, Lamb K, Navarro H, Prisinzano TE. Flavonoids as opioid receptor ligands: identification and preliminary structure-activity relationships. J Nat Prod. 2007;70(8):1278-82.

20. Cohen MR, Cohen RM, Pickar D, Murphy DL. Naloxone reduces food intake in humans. Psychosom Med. 1985;47(2):132-8.

21. Drewnowski A, Krahn DD, Demitrack MA, Nairn K, Gosnell BA. Naloxone, an opiate blocker, reduces the consumption of sweet high-fat foods in obese and lean female binge eaters. Am J Clin Nutr. 1995;61(6):1206-12.

22. Guyenet S. The Case for the Food Reward Hypothesis of Obesity, Part II. https://wholehealthsource.blogspot.com/2011/10/case-for-food-reward-hypothesis-of_07.html. October 7, 2011. Accessed October 8, 2011.

23. Collier G, O'Dea K. The effect of coingestion of fat on the glucose, insulin, and gastric inhibitory polypeptide responses to carbohydrate and protein. Am J Clin Nutr. 1983;37(6):941-4.

24. Guyenet S. Fat Tissue Insulin Sensitivity and Obesity. https://wholehealthsource.blogspot.com/2011/09/fat-tissue-insulin-sensitivity-and.html September 13, 2011. Accessed October 12, 2011.

25. Massey LK. Dietary animal and plant protein and human bone health: a whole foods approach. J Nutr. 2003;133(3):862S-865S.

26. Uribarri J, Oh MS. Electrolytes, Water, and Acid-Base Balance. In Shils ME, Shike M, Ross AC, Caballero B, Cousins RJ. Modern Nutrition in Health and Disease (Baltimore, MD: Lippincott Williams & Wilkins) p. 177.

27. Jenkins DJ, Kendall CW, Vidgen E, Augustin LS, Parker T, Faulkner D, Vieth R, Vandenbroucke AC, Josse RG. Effect of high vegetable protein diets on urinary calcium loss in middle-aged men and women. Eur J Clin Nutr. 2003;57(2):376-82.

28. Rabbani N, Thornalley PJ. The glyoxalase system — from microbial metabolism, through ageing to human disease and multi drug resistance. Semin Cell Dev Biol. 2011;22(3):261.